AI agent memory: in-context, episodic, semantic, procedural

If your AI agent loses all context the moment a session ends, the reflex fix - "just add memory" - usually makes things worse, because there isn't one kind of memory to add. Agent memory splits into four distinct types - in-context, episodic, semantic, and procedural - and which one is missing depends entirely on what kind of forgetting you're actually seeing. Get the diagnosis wrong and you end up bolting all four on anyway, the agent still blanks in session three, and you've spent a week solving the wrong problem.

TL;DR

- Why does my AI agent forget everything between sessions? LLMs are stateless - every API call resets the context window to blank. Memory requires external systems built on top of the model; nothing persists automatically.

- What are the four types of agent memory? In-context (active during one inference), episodic (timestamped log of what happened), semantic (persistent facts retrieved via RAG), and procedural (behavioral rules baked into system prompts or versioned instruction files).

- Which type should I implement first? If your agent needs to recall past actions: episodic. If it needs to recall facts across sessions: semantic. If it needs consistent behavior: procedural. Most production agents need all three; start with whichever gap is causing failures today.

- Does a 1M-token context window make external memory unnecessary? No. Even a 1M-token window resets on every API call and suffers attentional dilution at high fill rates. External memory is non-optional for stateful agents, not a performance optimization.

- Which framework handles memory best out of the box? CrewAI gives the most batteries-included setup (SQLite for task outcomes + ChromaDB RAG for short-term). LangGraph gives maximum control via checkpointing + external vector DB integrations. AutoGen is the lightest but requires you to wire external storage yourself.

The Blank Slate Problem

You build an agent for a customer support flow. You test it for two hours: it remembers the user already tried basic troubleshooting, tracks which products they mentioned, holds the thread together. Works exactly how you wanted. You deploy it and run it the next morning on the same user account. It asks for their name.

This isn't a bug in your agent. It's how LLMs work.

Every call to an LLM API is stateless. The model has no access to your previous call, your user's history, or anything that happened outside the tokens you send right now. What feels like "memory" during a session is just the conversation history included in that single request's context window.

How context windows actually work (and what resets)

A context window is the full input the model sees during one inference call. Everything the agent "knows" about its current task has to fit in there: system prompt, conversation history, tool call results, retrieved documents.

When you end a session and start a new one, that context is gone. Not degraded, not partially retained. Gone. The next API call starts with whatever tokens you put in the request. If you don't explicitly include yesterday's conversation, the model has no access to it.

What's inside a typical agent's context window mid-session:

- System prompt - behavior rules, persona, tool definitions

- Conversation turns - the active thread of messages

- Tool outputs - results from function calls the agent made

- Retrieved content - documents fetched via search or RAG

All of this is rebuilt fresh on each call. None of it persists automatically.

Why 1M tokens doesn't solve it

The obvious workaround: stuff the entire conversation history into a huge context window and the agent effectively has memory. For a handful of sessions, that's partially true. Three problems kill it at any real scale:

- Context windows reset between sessions regardless of size. A 1M token window doesn't help if you're not explicitly reloading the history on each new session call. Nothing is retained by default.

- Attentional dilution is real. Long-context models handle information buried in the middle of a massive context less reliably than content near the start or end - the "lost in the middle" effect formalized by Liu et al., 2023. The model processes your 800K tokens of history; it doesn't weight them evenly.

- Cost compounds fast. At typical API pricing, a 1M-token context on every agent call is not a viable architecture - especially once you add multiple users or parallel threads. Prompt caching (Anthropic, OpenAI, Google) reduces this for the prefix portion that's stable across calls, but it's a discount on a flawed shape, not a fix for it.

That's why external memory isn't an optimization. For any agent that needs to maintain state across sessions, it's a prerequisite.

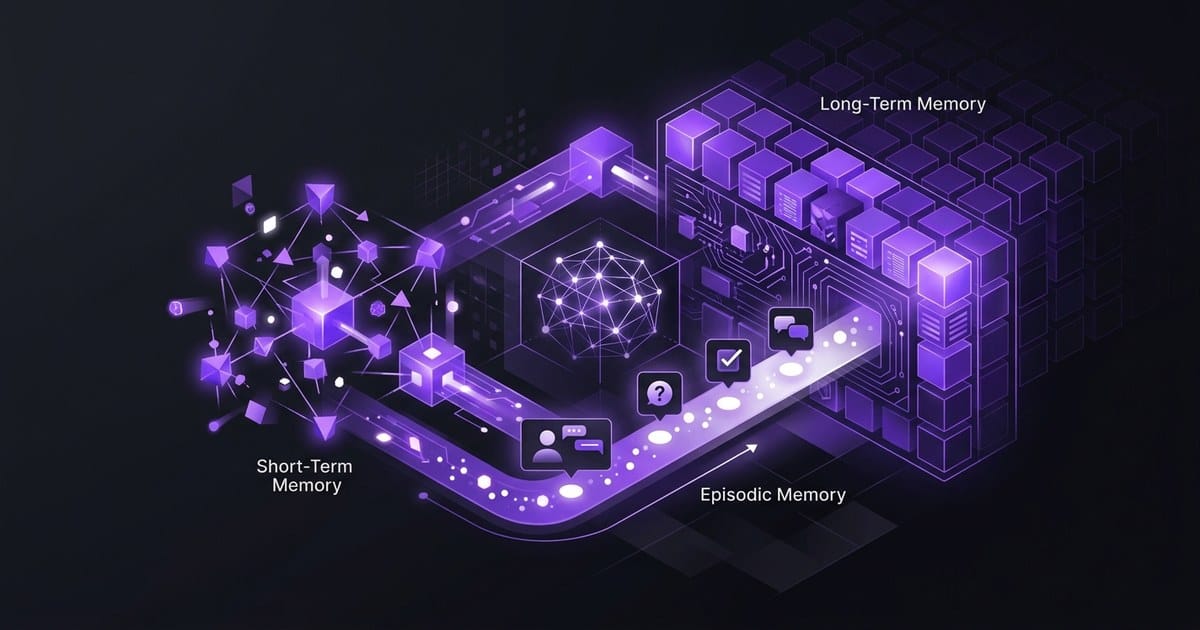

The Four Types of Agent Memory

There isn't one type of agent memory - there are four, and each maps to a different part of the statelessness problem. Developers who treat "agent memory" as a single thing to add usually bolt on the wrong one first, then wonder why the agent still goes blank in exactly the way that matters to their users.

Here's the taxonomy that holds up in practice, drawn from the CoALA (Cognitive Architectures for Language Agents) framework by Sumers et al. and how it maps to real implementations.

In-context: what's active right now

Think of this as the agent's working desk. Everything on the desk is immediately accessible. When the session ends, the desk gets swept clean.

In-context memory is the LLM's context window - every token passed to the API on each call. System prompt, conversation history, retrieved documents, tool outputs - if it's in the current request, it's in-context. No external storage required.

The catch: context windows reset with every API call. Even a 1M-token window still goes blank when the call ends. There's also a subtler problem - attentional dilution. Models attend less reliably to content buried deep in a long context. Larger windows help, but they don't solve the reset.

What in-context memory contains in a typical agent:

- Rolling conversation buffer (last N turns)

- System prompt with current task context

- Retrieved chunks injected from external stores per request

- Tool outputs from the current session

You already have this. Every agent you've built has in-context memory. The question is whether that's all it has.

Episodic: what happened and when

This is what your financial advisor uses when they say: "Last time we rebalanced, you moved out of tech right before the dip - should I apply the same logic now?" They're not just recalling a fact. They're recalling a specific event - a timestamp, a decision, an outcome.

For agents, episodic memory is a structured log of past interactions. Each entry looks something like:

```json
{
  "timestamp": "2026-04-15T09:32:00Z",
  "task": "generate_monthly_report",
  "inputs": {"period": "Q1 2026", "format": "pdf"},
  "actions": ["fetch_data", "run_template", "render"],
  "outcome": "success",
  "notes": "PDF render failed on first attempt, fell back to HTML conversion"
}
```

At retrieval time, the agent queries this log - by date range, task type, or semantic similarity - and pulls relevant entries into context before the current call. Vector embeddings are what enable semantic retrieval: the agent can surface past episodes that are conceptually similar to the current task, not just exact keyword matches.
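Here is a minimal sketch of that retrieval step. It assumes episodes are already loaded into memory as Python objects whose fields mirror the JSON entry above. The Episode class and the recall and to_context helpers are hypothetical names for illustration. Semantic-similarity lookup is left out; in practice it would run as an embedding search alongside (or instead of) these filters.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class Episode:
    timestamp: datetime
    task: str
    inputs: dict
    actions: list
    outcome: str
    notes: str = ""


def recall(episodes: list, task: Optional[str] = None,
           since: Optional[datetime] = None, until: Optional[datetime] = None,
           limit: int = 5) -> list:
    """Filter by task type and date range, newest first."""
    hits = [
        e for e in episodes
        if (task is None or e.task == task)
        and (since is None or e.timestamp >= since)
        and (until is None or e.timestamp < until)
    ]
    hits.sort(key=lambda e: e.timestamp, reverse=True)
    return hits[:limit]


def to_context(episodes: list) -> str:
    """Render episodes one per line, ready to inject ahead of the current call."""
    return "\n".join(
        f"[{e.timestamp.isoformat()}] {e.task}: {e.outcome}. {e.notes}".rstrip()
        for e in episodes
    )


log = [
    Episode(datetime(2026, 4, 15, 9, 32, tzinfo=timezone.utc), "generate_monthly_report",
            {"period": "Q1 2026", "format": "pdf"}, ["fetch_data", "run_template", "render"],
            "success", "PDF render failed on first attempt, fell back to HTML conversion"),
]
print(to_context(recall(log, task="generate_monthly_report")))
```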

Episodic memory is what lets an agent say "we tried this last Tuesday and it failed in this specific way." Without it, every session starts from zero.

Semantic: what's persistently true

Semantic memory stores facts that are true independent of any specific interaction - things that should survive sessions without being tied to a timestamp or an event.

The simplest example: a user tells your agent "the dog's name is Henry." That should persist. It doesn't need a timestamp. It's not about what happened - it's about what's true.

In practice, semantic memory is an external knowledge base queried via RAG. Candidate facts get encoded as vector embeddings, stored in a vector database, and the most relevant ones get retrieved and injected into context per request.

Two things that break semantic memory in production:

- Curation failure - if you write everything without vetting, retrieval quality degrades fast. "The dog's name is Henry" is useful. "The user seemed slightly annoyed on April 3rd" probably isn't worth storing.

- Staleness - facts change. Knowledge bases need update and invalidation logic, not just write logic.

For a deeper look at how RAG retrieval works under the hood, the RAG vs fine-tuning vs better prompting decision tree covers the retrieval mechanics in detail.

Procedural: how to behave

Procedural memory is behavioral - not what the agent knows, but how it acts. It encodes rules, preferences, and patterns that should shape every output.

For agents, procedural memory lives in three places:

- System prompts - "always cite sources," "respond in under 200 words," "never mention competitor products"

- Source-controlled instruction files - AGENTS.md and SOUL.md patterns checked into the repo alongside the code

- Fine-tuned weights - the highest-investment option, baking behavior directly into the model

The strongest production setups treat procedural memory as infrastructure: behavioral rules live in version-controlled files, get code-reviewed like any other change, and get deployed like any other config. If a rule shapes every action the agent takes, it deserves the same change management as your codebase.

The difference between procedural and semantic memory is easy to confuse. A useful test: if you'd express it as an "is" statement ("the dog's name is Henry"), it's semantic. If you'd express it as a "should" statement ("the agent should always cite sources"), it's procedural.

Which Memory Type Do You Actually Need?

Most memory architecture mistakes happen before a single line of code is written. Developers reach for a vector database because they heard about RAG, or bolt on a message buffer because a tutorial showed one - without diagnosing what their agent is actually forgetting.

Answer four questions. They'll cut the decision space from four memory types to one or two.

The four-question diagnostic

Q1: Does your agent need to retain anything between sessions?

If your agent runs once, produces output, and is done - in-context memory is all you need. Every API call already gives you a context window; you're already using it. Stop here and go build.

If your agent needs to continue a relationship across sessions - same user, different days, ongoing tasks - you need external memory. Continue to Q2.

Q2: What does "remembering" mean for your use case?

Pick the description that fits your agent:

- Events and outcomes - "Last Tuesday it recommended Option A and it worked" - this is episodic memory

- Stable facts about users or domain - "User prefers formal English, budget cap is $500" - this is semantic memory

- Consistent behavioral rules - "Always cite sources, never guess names, follow this tone" - this is procedural memory

If more than one fits, note which feels most urgent. That's your first implementation target.

Q3: Does your agent need to reason about its own past actions?

If the agent should say "Last time we tried approach A and it failed, so this time I'll try B" - episodic memory is non-negotiable. A fact store won't help here; you need a timestamped event log with outcome tracking.

If the agent just needs to know facts independent of when or how it learned them, you can skip episodic entirely and go straight to semantic.

Q4: How much infrastructure can you add today?

Be honest about this one - "eventually" is not a cost tier.

- Zero infra tolerance: Procedural only - AGENTS.md or system prompt file under version control, no external database

- SQLite is acceptable: Episodic via a local event log, or lightweight semantic via a small embedded vector store

- You can run a managed vector DB: Full semantic retrieval or a hybrid episodic + semantic stack
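The four questions can be read as a small decision procedure. The sketch below encodes it under a few stated assumptions:

- The Q2 answer is passed as a list ordered by urgency.
- A "yes" to Q3 puts episodic memory first.
- The Q4 tiers map to the feasible memory types listed above.

The function name, tier labels, and return shape are all hypothetical.

```python
# Q2 answers -> memory types (from the list in Q2)
KIND_TO_TYPE = {"events": "episodic", "facts": "semantic", "rules": "procedural"}

# Q4 infra tolerance -> memory types you can stand up today (hypothetical tier labels)
FEASIBLE = {
    "none": {"procedural"},
    "sqlite": {"procedural", "episodic", "semantic"},
    "managed_vector_db": {"procedural", "episodic", "semantic"},
}


def diagnose(cross_session: bool, remembers: list,
             reflects_on_past_actions: bool, infra: str) -> tuple:
    """Return (build_now, defer) lists of memory types, most urgent first."""
    if not cross_session:                          # Q1: single-session agents stop here
        return ["in-context"], []
    wanted = [KIND_TO_TYPE[k] for k in remembers]  # Q2, in the caller's urgency order
    if reflects_on_past_actions and "episodic" not in wanted:
        wanted.insert(0, "episodic")               # Q3: self-review needs an event log
    build_now = [t for t in wanted if t in FEASIBLE[infra]]   # Q4
    defer = [t for t in wanted if t not in FEASIBLE[infra]]
    return build_now, defer


print(diagnose(True, ["facts", "rules"], True, "none"))
```

Take an agent that must remember facts and rules and review its own past actions, but can't add any infrastructure yet. For that agent, the call returns procedural to build now and episodic plus semantic to defer.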

Decision table: use case vs. memory type vs. cost tier

| Use case | Primary memory type | Implementation path | Est. monthly infra cost |
|---|---|---|---|
| Single-session assistant | In-context | Rolling context buffer, no external storage | $0 (tokens only) |
| Support bot with past-ticket context | Episodic | Timestamped event log + embedding retrieval | $5-20 |
| Personal assistant that knows user preferences | Semantic | RAG against a curated fact store (Chroma, Pinecone, Weaviate) | $15-40 |
| Code agent following project conventions | Procedural | AGENTS.md or SOUL.md under version control | $0 |
| Multi-session research agent | Semantic + Episodic | Vector DB + event log, separate retrieval paths | $30-80 |
| Customer-facing production agent | All four | Hybrid: in-memory cache + vector DB + event log + rules files | $80+ |

Cost tiers assume moderate usage (hundreds of queries per day) and exclude LLM token costs - only memory infrastructure.

One thing the table obscures: the $0 rows aren't free. Procedural memory stored in system prompts adds tokens to every call. A 2,000-token AGENTS.md file sent on 1,000 calls per day is 2M input tokens per day. Assuming $3 per million input tokens (Sonnet-class list pricing through 2025), that comes to roughly $6 per day before prompt caching, and a fraction of that once the stable prefix is cached. Small, but not zero, and it compounds at scale. See the AI cost optimization guide if that math matters for your use case.

Start with the row that matches your agent today. Not where you think it's going in six months.
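You can rerun the procedural-memory token arithmetic above with your own numbers. Here is a minimal sketch. It assumes a flat per-million-token input price and a simple cached-read discount, and it ignores cache-write surcharges. The function name and parameters are hypothetical, and the prices are placeholders; replace them with your provider's current rates.

```python
def daily_prompt_cost(prompt_tokens: int, calls_per_day: int, usd_per_mtok: float,
                      cached_fraction: float = 0.0,
                      cached_price_ratio: float = 0.1) -> float:
    """USD per day spent re-sending a fixed prompt prefix on every call.

    cached_fraction: share of calls that hit a warm prompt cache (assumption).
    cached_price_ratio: cached-read price as a fraction of the base input price
    (assumption; providers differ, check current pricing).
    """
    tokens = prompt_tokens * calls_per_day
    uncached_cost = tokens * (1 - cached_fraction) * usd_per_mtok
    cached_cost = tokens * cached_fraction * usd_per_mtok * cached_price_ratio
    return (uncached_cost + cached_cost) / 1_000_000


# 2,000-token AGENTS.md, 1,000 calls/day, assumed $3 per million input tokens
print(daily_prompt_cost(2_000, 1_000, 3.0))                                   # 6.0
print(round(daily_prompt_cost(2_000, 1_000, 3.0, cached_fraction=0.95), 2))  # 0.87
```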

How Memory Gets Implemented

Each memory type maps to a different infrastructure layer. In-context runs in the request itself - a rolling buffer of recent messages, auto-truncated or summarized when it approaches the context limit. Episodic sits in an event log: structured records with timestamp + task + actions + outcome, embedded and indexed for retrieval.

Semantic memory is a RAG pipeline - chunk your knowledge base, embed it, store it in a vector DB, retrieve by similarity at query time. Procedural lives in the system prompt or a version-controlled instruction file (AGENTS.md, SOUL.md), loaded before every inference and shaping every response.

Snippet: rolling in-context memory with trim_messages

In-context is the easiest to manage - no external infrastructure, just the context window curated before each call. In current LangChain (0.3.x), the legacy ConversationBufferMemory and ConversationChain are deprecated; the recommended pattern is to maintain a message list yourself and pass it through trim_messages before each call. For state that survives process restarts, layer this on top of LangGraph checkpointing (shown later in this article).

```python
# LangChain 0.3.x - tested 2026-05-11
from langchain_core.messages import HumanMessage, trim_messages
from langchain_openai import ChatOpenAI

llm = ChatOpenAI(model="gpt-4o-mini")
history: list = []  # persist this between turns yourself

def chat(user_input: str) -> str:
    history.append(HumanMessage(content=user_input))
    trimmed = trim_messages(
        history,
        max_tokens=4096,
        strategy="last",    # keep the most recent messages
        token_counter=llm,  # uses the model's tokenizer
        include_system=True,
        allow_partial=False,
    )
    response = llm.invoke(trimmed)
    history.append(response)
    return response.content
```

One watch-out: attentional dilution sets in well before you hit the token budget. For sessions longer than ~20 turns, test whether the model reliably surfaces early context. In practice, it often doesn't - which is the case for swapping strategy="last" for a summarization step, or for adding episodic retrieval on top.

Vector indexing: HNSW vs IVF vs FLAT

Once you add episodic or semantic memory, you pick a vector index. The wrong choice doesn't break at small scale - it gets slow or expensive later.

- HNSW (Hierarchical Navigable Small World, Malkov & Yashunin) - graph-based, high recall, low latency. The safe default for datasets under ~100M vectors. The M parameter (graph connectivity) is the main memory-vs-recall lever: M=16-32 adds roughly 20-60% overhead over the raw vectors and is fine for most workloads; M=64-128 pushes recall up at 1.5-2x the memory footprint. Link storage per vector depends on M, not on dimensionality, so the relative overhead is smaller for high-dimensional embeddings. Most semantic and episodic retrieval use cases land here.

- IVF (Inverted File Index) - cluster-based, memory-efficient. Scales to hundreds of millions of vectors, but recall is lower than HNSW unless you tune nprobe. Right choice when your memory store is large and you can tolerate a one-time training step.

- FLAT - brute-force exact search, perfect recall. The real cutoff is latency tolerance, not vector count: a 50K x 1536-dim scan runs in ~30-50ms on a modern CPU, and 500K runs in ~300ms (fine for batch, painful for interactive chat). Useful for procedural stores or small curated fact bases where accuracy is non-negotiable.
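To make the FLAT trade-off concrete, here is a pure-Python sketch of exact search. Every stored vector is scored against the query, so cost grows linearly with the number of vectors. It is illustrative only: real FLAT indexes use vectorized or SIMD kernels, and the function names here are hypothetical.

```python
import heapq
import math


def cosine(a: list, b: list) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def flat_search(query: list, vectors: dict, k: int = 3) -> list:
    """Exact top-k by cosine similarity over {id: embedding}.

    Scores every vector (O(n * dim) per query): perfect recall, linear latency.
    Returns [(score, id), ...] best first.
    """
    scored = ((cosine(query, v), vid) for vid, v in vectors.items())
    return heapq.nlargest(k, scored)


store = {"dog_name": [0.9, 0.1, 0.0], "budget_cap": [0.0, 0.2, 0.9], "tone": [0.1, 0.9, 0.1]}
print(flat_search([1.0, 0.0, 0.0], store, k=2))
```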

The hybrid architecture pattern

No single backend handles all four memory types well. Production agents end up with a stack:

```text
├── In-memory cache → in-context (session state, rolling buffer)
├── Event log → episodic (append-only, timestamped, immutable)
├── Vector DB → semantic + episodic retrieval (RAG pipeline)
└── Version-controlled files → procedural (AGENTS.md / SOUL.md, loaded at startup)
```

The event log is append-only by design - you never mutate a past event, you add a new one. That immutability is what makes episodic memory trustworthy for audit trails and "last time you ran this task, here's what happened" retrieval.
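Here is a minimal sketch of that append-only log on SQLite, using only the Python standard library. The table layout, trigger, and helper names are assumptions for illustration:

- A trigger rejects UPDATE so past events can't be rewritten.
- Date-range queries rely on every timestamp being stored in the same ISO-8601 UTC format, so that string comparison matches time order.
- Deletes are deliberately not blocked, because compliance-driven deletion is a separate policy decision.

```python
import json
import sqlite3
from datetime import datetime, timezone
from typing import Optional

SCHEMA = """
CREATE TABLE IF NOT EXISTS episodes (
    id      INTEGER PRIMARY KEY AUTOINCREMENT,
    ts      TEXT NOT NULL,   -- ISO-8601 UTC, e.g. 2026-04-15T09:32:00+00:00
    task    TEXT NOT NULL,
    payload TEXT NOT NULL    -- JSON: inputs, actions, outcome, notes
);
CREATE INDEX IF NOT EXISTS episodes_ts ON episodes (ts);
CREATE TRIGGER IF NOT EXISTS episodes_append_only
BEFORE UPDATE ON episodes
BEGIN
    SELECT RAISE(ABORT, 'episodes are append-only');
END;
"""


def open_log(path: str = ":memory:") -> sqlite3.Connection:
    conn = sqlite3.connect(path)
    conn.executescript(SCHEMA)
    return conn


def append(conn: sqlite3.Connection, task: str, ts: Optional[str] = None, **payload) -> None:
    """Add a new event; never modify an old one."""
    ts = ts or datetime.now(timezone.utc).isoformat(timespec="seconds")
    with conn:  # commit on success, roll back on error
        conn.execute("INSERT INTO episodes (ts, task, payload) VALUES (?, ?, ?)",
                     (ts, task, json.dumps(payload)))


def between(conn: sqlite3.Connection, start: str, end: str,
            task: Optional[str] = None) -> list:
    """Events with start <= ts < end, optionally for one task type, oldest first."""
    sql = "SELECT ts, task, payload FROM episodes WHERE ts >= ? AND ts < ?"
    args = [start, end]
    if task is not None:
        sql += " AND task = ?"
        args.append(task)
    sql += " ORDER BY ts, id"
    return [(ts, t, json.loads(p)) for ts, t, p in conn.execute(sql, args)]
```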

In smaller systems, a single Chroma or Qdrant instance often covers both semantic and episodic search. Graph databases appear in more complex stacks when the agent needs to reason across entity relationships - tracking that a specific user mentioned a specific project connected to a specific budget. If you're not doing relationship reasoning, skip the graph layer.

Framework Comparison: LangGraph vs. CrewAI vs. AutoGen

Most developers pick a framework before they think about memory. That's fine - but each framework ships different defaults, has a different customization ceiling, and requires different amounts of external wiring. Getting the mental model wrong here means retrofitting memory into a framework that wasn't designed for your use case.

Here's what each actually gives you:

| Framework | Long-term default | Short-term default | Customization ceiling |
|---|---|---|---|
| CrewAI | SQLite3 for task outcomes (automatic) | ChromaDB RAG (automatic) | Medium - opinionated, limited override |
| LangGraph | None - you wire it | Checkpointed state per thread | High - you control everything |
| AutoGen | None | Conversation message list | Medium - external storage, developer-managed |

CrewAI is batteries-included. LangGraph is a blank canvas. AutoGen sits between them - lightweight by design, requiring explicit developer management for anything beyond the current message list.

A category worth knowing about, even if you stay in one of the above: purpose-built memory products like Mem0, Zep, Letta, and LangMem sit one layer below orchestrators. They don't run your agent loop; they own the memory layer - extraction, deduplication, retrieval, decay - and expose APIs that LangGraph, CrewAI, AutoGen, and custom stacks can call. For agents where memory quality is the product (personal assistants, support bots with long user histories), bolting one of these on tends to beat building the equivalent in-house against a raw vector DB.

Example: CrewAI memory init (SQLite + RAG)

CrewAI's memory system activates with a single flag. SQLite stores task results and outcomes - closer to episodic memory in CoALA's taxonomy than to a general fact store - and ChromaDB handles short-term retrieval via RAG. You don't write the plumbing. The CrewAI memory docs walk through the entity memory layer too.

Two configuration gotchas don't appear in the headline example:

1. memory=True defaults to OpenAI embeddings. If OPENAI_API_KEY isn't set and no embedder= override is configured, the crew runs normally until the first memory operation, then fails mid-task. Pass embedder={"provider": "...", "config": {...}} explicitly for any non-OpenAI setup.
2. The local SQLite plus local ChromaDB combo doesn't handle parallel crews against the same storage well. Concurrent writes surface as "database is locked" errors before they surface as slow queries. If you're running more than one crew worker, that pair is not the right backend.

```python
# CrewAI 0.28.x - tested 2026-04-15
from crewai import Crew, Agent, Task

researcher = Agent(
    role="Research Analyst",
    goal="Find relevant information on {topic}",
    backstory="Expert researcher with strong analytical skills.",
    verbose=True
)

research_task = Task(
    description="Research the topic: {topic}",
    agent=researcher,
    expected_output="A detailed report on {topic}"
)

crew = Crew(
    agents=[researcher],
    tasks=[research_task],
    memory=True,  # enables SQLite task-outcome store + ChromaDB RAG
    verbose=True
)

result = crew.kickoff(inputs={"topic": "agent memory architectures"})
```

This setup works immediately. The tradeoff: you can't easily swap ChromaDB for Pinecone or redirect SQLite to Postgres without forking CrewAI internals. For projects where the defaults fit, it's fast to ship. For production systems with specific infrastructure requirements, you'll feel the constraint early.

Example: LangGraph checkpointing config

LangGraph persists state through checkpointers. It ships with InMemorySaver for development and first-class support for external backends. Two gotchas worth flagging up-front, since the official examples don't lead with them:

- The SQLite saver lives in a separate package: pip install langgraph-checkpoint-sqlite.

- SqliteSaver.from_conn_string() is a context manager, not a constructor. Passing its return value straight into compile() fails with TypeError: Invalid checkpointer. For long-lived processes, instantiate it directly from a sqlite3 connection instead.

```python
# LangGraph 1.x - verified 2026-05-11
import sqlite3
from typing import TypedDict

from langgraph.graph import StateGraph
from langgraph.checkpoint.sqlite import SqliteSaver

class AgentState(TypedDict):
    messages: list
    memory_context: str

def process_node(state: AgentState) -> AgentState:
    # agent logic here
    return state

graph_builder = StateGraph(AgentState)
graph_builder.add_node("process", process_node)
graph_builder.set_entry_point("process")
graph_builder.set_finish_point("process")

# Long-lived process: own the connection, then own the saver.
conn = sqlite3.connect("checkpoints.sqlite", check_same_thread=False)
checkpointer = SqliteSaver(conn)

# Short-lived script (alternative): use the context-manager form.
# with SqliteSaver.from_conn_string("checkpoints.sqlite") as checkpointer:
#     graph = graph_builder.compile(checkpointer=checkpointer)

graph = graph_builder.compile(checkpointer=checkpointer)
config = {"configurable": {"thread_id": "user-session-001"}}
result = graph.invoke({"messages": [], "memory_context": ""}, config=config)
```

thread_id is the key mechanism - it namespaces state per conversation, per user, or per organization. For B2B agents, scope it as {tenant_id}:{user_id}:{session_id} so per-user memory can't leak across customers.
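A small guard makes that scoping harder to get wrong. Here is a sketch with a hypothetical make_thread_id helper. It rejects empty parts and parts that contain the ":" delimiter. Without that check, tenant "a:b" with user "c" and tenant "a" with user "b:c" would produce the same key.

```python
def make_thread_id(tenant_id: str, user_id: str, session_id: str) -> str:
    """Build a '{tenant_id}:{user_id}:{session_id}' key for LangGraph's thread_id.

    Empty parts and parts containing ':' are rejected so that keys from
    different tenants or users can never collide.
    """
    parts = (tenant_id, user_id, session_id)
    for part in parts:
        if not part or ":" in part:
            raise ValueError(f"invalid thread_id component: {part!r}")
    return ":".join(parts)


config = {"configurable": {"thread_id": make_thread_id("acme", "u-42", "s-0511")}}
```

The resulting config dict goes to graph.invoke in the same way as the hard-coded one in the snippet above.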

Swapping SqliteSaver for AsyncPostgresSaver is a real change, not a drop-in. Async savers expect async nodes, an async-aware lifespan to manage the connection pool, and JSON-serializable state. For Postgres in production, plan to touch your node signatures and your framework lifespan hooks too - the official LangGraph tutorial covers the FastAPI lifespan pattern in detail.

When to pick each

The decision depends less on feature lists and more on how much you want to own:

- CrewAI - best when the default SQLite + RAG setup fits your use case and you don't need infrastructure control. Fastest to ship; hardest to customize under the hood.

- LangGraph - best when you have specific memory backends, need fine-grained control over what gets persisted, or are building a system that needs to scale. More initial wiring; no hidden constraints later.

- AutoGen - best for research, prototyping, and conversational agents where you want to manage memory explicitly. You write the memory logic yourself - more work, more transparency about what's actually stored.

One production note worth flagging: CrewAI's automatic ChromaDB runs as embedded, single-node vector storage. Per Chroma's own docs, single-node deployments are comfortable up to roughly tens of millions of embeddings - that covers most agent memory workloads. The wall you hit first is rarely raw vector count; it's QPS, high-availability replication, or running more than one worker against the same store. When those are your bottleneck, LangGraph with a managed Pinecone, Weaviate, or Qdrant cluster swaps in cleanly. CrewAI's defaults don't.

A Three-File Pattern Worth Stealing

Before you reach for a vector DB, a lighter pattern handles most agents that aren't yet at scale. Three plain-text files, three jobs - no managed embeddings, no specialist to maintain it.

Episodic memory runs as daily standup logs - plain text files, one per day, timestamped. Each file captures what tasks ran, what the agent decided, and what happened. Retrieval is a date-range query. Want to know what the agent did last Tuesday? grep works. Want to check if the agent made the same mistake three weeks ago? Scroll back through the log files. Episodic memory works on timestamps first, semantics second - and for most use cases, that's enough.
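Here is a minimal sketch of the standup-log pattern using the standard library. It assumes one Markdown file per day named logs/YYYY-MM-DD.md; the directory layout and helper names are hypothetical. Retrieval is exactly the date-range read and plain substring search described above, nothing more.

```python
import tempfile
from datetime import date, timedelta
from pathlib import Path


def log_path(root: Path, day: date) -> Path:
    return root / f"{day.isoformat()}.md"  # one file per day, e.g. 2026-05-11.md


def append_entry(root: Path, day: date, text: str) -> None:
    """Append one line to that day's log, creating the file if needed."""
    root.mkdir(parents=True, exist_ok=True)
    with log_path(root, day).open("a", encoding="utf-8") as f:
        f.write(text.rstrip() + "\n")


def read_range(root: Path, start: date, end: date) -> dict:
    """Date-range query: {day: contents} for each existing log in [start, end]."""
    found, day = {}, start
    while day <= end:
        path = log_path(root, day)
        if path.exists():
            found[day] = path.read_text(encoding="utf-8")
        day += timedelta(days=1)
    return found


def grep(root: Path, needle: str) -> list:
    """Substring search across all logs, oldest first: [(day, line), ...]."""
    hits = []
    for path in sorted(root.glob("*.md")):
        for line in path.read_text(encoding="utf-8").splitlines():
            if needle in line:
                hits.append((path.stem, line))
    return hits


# Usage: a throwaway directory stands in for the project's logs/ folder.
with tempfile.TemporaryDirectory() as tmp:
    logs = Path(tmp) / "logs"
    append_entry(logs, date(2026, 5, 5), "09:10 deploy_check failed: stale token, retried")
    append_entry(logs, date(2026, 5, 11), "10:00 deploy_check succeeded")
    last_week = read_range(logs, date(2026, 5, 4), date(2026, 5, 10))
    failures = grep(logs, "failed")
```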

Semantic memory is a MEMORY.md file - a curated list of lasting truths about the project, the user, and the system. Not a conversation dump. Someone consciously decides what goes in: "user prefers short answers," "Ghost API requires ?source=html," "DB schema changed in v2." That curation step is the whole point. Without it, you end up with a long file nobody trusts.

Procedural memory is AGENTS.md (and optionally SOUL.md), checked into version control. These are the behavioral rules that shape how the agent operates. They live in git so that when behavior changes, you get a diff, not a mystery. If an agent starts acting differently and you want to know why, git blame is a legitimate debugging tool.

Three files, three jobs:

- Standup logs: what happened

- MEMORY.md: what's permanently true

- AGENTS.md / SOUL.md: how to behave

This isn't the stack you'd run at scale. But it's what you can stand up today, on your laptop, without waiting on infrastructure. In-context memory (the context window) handles what's happening right now. Everything else lives in plain text files. Readable, grep-able, committable alongside your code.

The vector DB comes later - when you have enough episodic data that grep stops cutting it, when MEMORY.md grows too large to inject whole, when retrieval latency actually matters. Cross that bridge when you get there. Most projects don't.
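Wiring the three files into a request can be as simple as reading them and concatenating sections into the system prompt. Here is a sketch under these assumptions:

- AGENTS.md, SOUL.md, and MEMORY.md sit at the repository root.
- Daily logs follow a hypothetical logs/YYYY-MM-DD.md naming scheme.
- Only the last few days of logs are included.

Section titles and function names are illustrative.

```python
import tempfile
from datetime import date, timedelta
from pathlib import Path


def read_if_exists(path: Path) -> str:
    return path.read_text(encoding="utf-8").strip() if path.exists() else ""


def build_system_prompt(repo: Path, today: date, log_days: int = 3) -> str:
    """Procedural rules, then semantic facts, then recent episodes."""
    sections = []
    rule_texts = (read_if_exists(repo / name) for name in ("AGENTS.md", "SOUL.md"))
    rules = "\n\n".join(t for t in rule_texts if t)
    if rules:
        sections.append("## How to behave\n" + rules)
    facts = read_if_exists(repo / "MEMORY.md")  # injected whole: fine while it stays small
    if facts:
        sections.append("## What is true\n" + facts)
    recent = []
    for offset in range(log_days - 1, -1, -1):  # oldest day first, today last
        day = today - timedelta(days=offset)
        text = read_if_exists(repo / "logs" / f"{day.isoformat()}.md")
        if text:
            recent.append(f"### {day.isoformat()}\n{text}")
    if recent:
        sections.append("## What happened recently\n" + "\n".join(recent))
    return "\n\n".join(sections)


with tempfile.TemporaryDirectory() as tmp:
    repo = Path(tmp)
    (repo / "AGENTS.md").write_text("Always cite sources.\n", encoding="utf-8")
    (repo / "MEMORY.md").write_text("- user prefers short answers\n", encoding="utf-8")
    (repo / "logs").mkdir()
    (repo / "logs" / "2026-05-10.md").write_text("ran weekly_digest: success\n", encoding="utf-8")
    prompt = build_system_prompt(repo, today=date(2026, 5, 11))
```

MEMORY.md is injected whole here, so its size shows up directly in per-call token cost.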

What Breaks in Practice

Three failure modes appear after the demo and before the next production deploy. Vendor blogs skip these.

Stale semantic stores

Semantic memory degrades silently. You add facts to your vector store, the agent retrieves them with high confidence - and the facts are six months out of date. The failure isn't "retrieval failed." It's "retrieval succeeded on wrong data."

Two contradicting embeddings with near-identical similarity scores don't cancel out. They produce confident, averaged-out wrong answers.

Concrete mitigations:

- Timestamp every embedding. Store created_at and optionally valid_until metadata alongside each vector at write time.

- Filter at retrieval, not just at write. Pure cosine similarity is blind to recency. Apply metadata filters (valid_until > now, superseded = false) before the ANN search picks winners, or add a re-ranking step that scores by similarity x recency rather than similarity alone. This is the single most impactful production fix and gets skipped most often.

- Delete on update, don't append. When a fact changes, supersede the old embedding. Appending a correction next to the original leaves both in rotation, and the LLM tends to reconcile them by averaging - which is how confidently wrong answers happen.

- Adversarial retrieval tests. Before deploying updates, query the store with questions about something you know changed 6 months ago. If the agent returns confident wrong answers, your pruning cadence is too slow.
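Here is a minimal in-memory sketch of the first three mitigations. It assumes each fact carries a stable key (a hypothetical identifier such as user.budget_cap) so that an update can find the fact it supersedes. A list comprehension stands in for the metadata filter that a vector database would apply inside its query. The 90-day half-life in the recency decay is an arbitrary assumption to tune.

```python
import math
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class Fact:
    key: str                               # hypothetical stable id, e.g. "user.budget_cap"
    text: str
    embedding: list
    created_at: datetime                   # timestamp every embedding at write time
    valid_until: Optional[datetime] = None
    superseded: bool = False


def cosine(a: list, b: list) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def upsert(store: list, new: Fact) -> None:
    """Supersede, don't append alongside: retire live facts with the same key."""
    for old in store:
        if old.key == new.key and not old.superseded:
            old.superseded = True
    store.append(new)


def retrieve(store: list, query: list, now: datetime, k: int = 3,
             half_life_days: float = 90.0) -> list:
    """Filter out expired/superseded facts first, then rank by similarity x recency."""
    live = [f for f in store
            if not f.superseded and (f.valid_until is None or f.valid_until > now)]

    def score(f: Fact) -> float:
        age_days = (now - f.created_at).total_seconds() / 86_400
        recency = 0.5 ** (age_days / half_life_days)  # halves every half_life_days
        return cosine(query, f.embedding) * recency

    return sorted(live, key=score, reverse=True)[:k]


now = datetime(2026, 5, 11, tzinfo=timezone.utc)
facts: list = []
upsert(facts, Fact("user.budget_cap", "Budget cap is $500", [1.0, 0.0], now - timedelta(days=200)))
upsert(facts, Fact("user.budget_cap", "Budget cap is $800", [1.0, 0.0], now - timedelta(days=5)))
print([f.text for f in retrieve(facts, [1.0, 0.0], now)])  # ['Budget cap is $800']
```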

Runaway episodic logs

Episodic memory feels safe to accumulate, since it's just timestamped history. The problem shows up at scale. A pipeline logging 500 entries per day exceeds 180,000 entries in a year. FLAT index latency grows linearly with entry count, and HNSW latency climbs once the graph no longer fits in RAM and spills to disk. Either way, the slowdown becomes visible before the six-month mark.

Four ways to control it:

- Rolling window with a hard cap. Keep full episodic entries for 60-90 days; collapse older sessions into summarized semantic facts.

- Hot/cold index split. Recent episodes in a small fast index; archived summaries in a separate index queried only when the hot index yields low confidence.

- Prune at write time, not read time. Filtering during retrieval still pays the full search cost. Prune in the write pipeline before entries hit the index.

- Plan for deletion from day one. Append-only is the right default operationally, but it collides with GDPR's right-to-be-forgotten and equivalent regimes the moment your agent logs identifiable user data. Practical fixes: tombstone records with a deleted_at flag plus a periodic compaction pass, or partition the log by user_id so a deletion request is a partition drop. Encryption-at-rest with per-user keys you can revoke is the heavier alternative. None of these are free - decide which one applies before the first compliance review, not after.
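Here is a sketch of two of these controls together: a rolling window with a hard cutoff, plus a compaction pass that honors deletion requests recorded as tombstones. It makes three assumptions:

- Entries carry a user_id.
- Tombstones are kept as a set of user IDs appended by the deletion workflow.
- Outcome counts stand in for the summarization step. A production pipeline would more likely have an LLM write the summary that goes into semantic memory.

All names are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Entry:
    user_id: str
    ts: datetime
    task: str
    outcome: str


def compact(log: list, tombstoned_users: set, now: datetime,
            window_days: int = 90) -> tuple:
    """Return (hot_entries, cold_summaries).

    - entries for tombstoned users are dropped entirely (the actual erasure),
    - entries inside the window are kept verbatim for the fast "hot" index,
    - older entries collapse into one summary line per task for the cold tier.
    """
    cutoff = now - timedelta(days=window_days)
    hot, cold = [], {}
    for e in log:
        if e.user_id in tombstoned_users:
            continue
        if e.ts >= cutoff:
            hot.append(e)
        else:
            tally = cold.setdefault(e.task, {})
            tally[e.outcome] = tally.get(e.outcome, 0) + 1
    summaries = [
        f"{task}: " + ", ".join(f"{n} {outcome}" for outcome, n in sorted(by_outcome.items()))
        for task, by_outcome in sorted(cold.items())
    ]
    return hot, summaries


now = datetime(2026, 5, 11, tzinfo=timezone.utc)
log = [
    Entry("u-1", now - timedelta(days=200), "report", "success"),
    Entry("u-1", now - timedelta(days=150), "report", "failure"),
    Entry("u-1", now - timedelta(days=120), "report", "success"),
    Entry("u-1", now - timedelta(days=3), "report", "success"),
    Entry("u-2", now - timedelta(days=1), "report", "success"),  # user asked to be forgotten
]
hot, summaries = compact(log, {"u-2"}, now)
print(len(hot), summaries)  # 1 ['report: 1 failure, 2 success']
```

Running a pass like this in the write pipeline, before entries reach any index, keeps the search cost proportional to the hot window rather than to the full history.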

Procedural drift

Procedural rules - system prompts, AGENTS.md, SOUL.md - are the easiest memory type to set up and the hardest to keep accurate. Agent behavior evolves through prompt iteration and tool updates; the procedural specifications describing how the agent should behave get updated less often.

The result is a diagnostic gap: when something breaks in production, you're debugging observed behavior against stale specs. The written rules say one thing, the deployed agent does another, and nothing on paper flags the difference.

One cheap fix: feed your current AGENTS.md plus 10 recent conversation logs to a second LLM and ask it to flag contradictions between stated rules and observed behavior. A one-shot prompt against a small model costs under $0.02 per check - wire it into your deployment pipeline rather than running it manually when something already broke.
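Here is a sketch of the prompt-assembly half of that check. The model call itself is left to whatever client your stack already uses. The function names, prompt wording, and NO_DRIFT sentinel are assumptions, chosen so the reply can gate a deploy step mechanically.

```python
def build_drift_check_prompt(agents_md: str, conversations: list, max_logs: int = 10) -> str:
    """Assemble a one-shot prompt asking a second model to compare rules vs behavior."""
    logs = conversations[-max_logs:]  # the most recent N conversations
    numbered = "\n\n".join(
        f"--- Conversation {i} ---\n{c.strip()}" for i, c in enumerate(logs, 1)
    )
    return (
        "You are auditing an AI agent.\n"
        "Below are its stated behavioral rules (AGENTS.md) and recent conversation logs.\n"
        "List every place where observed behavior contradicts a stated rule, "
        "quoting the rule and the conversation number. "
        "If there are no contradictions, reply exactly: NO_DRIFT.\n\n"
        f"=== AGENTS.md ===\n{agents_md.strip()}\n\n"
        f"=== Recent conversations ===\n{numbered}\n"
    )


def drift_detected(model_reply: str) -> bool:
    """Deploy gate: fail the check unless the reviewer replied NO_DRIFT."""
    return model_reply.strip() != "NO_DRIFT"
```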

What This Doesn't Cover

Three topics that deserve their own articles, flagged so readers know what's not in this one:

- Memory write triggers - deciding when something is worth persisting. "Always write" blows up storage; "write on explicit user instruction" misses most useful facts. Heuristic-based extraction, LLM-judged salience, and confidence thresholds are all live techniques and worth their own treatment.

- Memory evals - measuring whether a memory layer is actually helping. Retrieval precision@k, contradiction-detection rates, recency-weighted accuracy, and end-to-end task success with vs. without memory are all standard. Without these, you can't tell if a memory change made the agent smarter or just chattier.

- Tenancy and isolation at depth - per-user vs per-organization scoping, sharing across roles, audit trails, and the access-control story for shared facts. The thread_id mention earlier is the entry point, not the full picture.